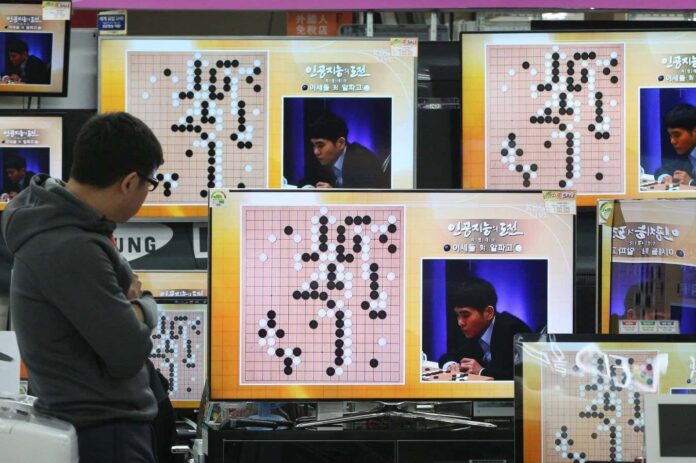

Ten years ago, the world watched in stunned silence as Google DeepMind’s AlphaGo defeated Lee Sedol, one of the greatest Go players in history. This wasn’t just another AI beating a human; it was a watershed moment that revealed a new kind of intelligence. The victory sparked a visceral reaction, with Lee Sedol himself admitting he was “in shock” at the AI’s intuitive and sometimes inexplicable moves.

The Breakthrough: Neural Networks and Intuition

AlphaGo’s success rested on neural networks, mathematical models loosely inspired by the human brain. Unlike traditional game-playing programs that rely on hand-coded rules, AlphaGo learned to play. It did this by analyzing millions of positions from human games and then, crucially, by playing millions more games against itself. This self-play allowed the AI to discover strategies that human players had not considered.

As Sergey Brin, Google co-founder, observed at the time, AlphaGo exhibited “intuition,” making “beautiful moves” that even humans would struggle to conceive. This wasn’t mere calculation; it was a new form of pattern recognition that surpassed human capability. The game of Go, with its staggering 10^171 possible positions, provided the perfect test case.

From Go to Real-World Impact

The legacy of AlphaGo extends far beyond the board game. The underlying technology has been applied to critical fields like biology and mathematics. Most notably, AlphaFold, another DeepMind AI, revolutionized protein structure prediction, earning its creators a Nobel Prize in Chemistry. More recently, AlphaProof achieved medal-level performance on problems from the International Mathematical Olympiad, demonstrating AI’s growing aptitude for complex reasoning.

The core principle driving these successes is consistent: feed a neural network vast amounts of data and define clear metrics for success. In Go, it was winning the game; in protein folding, it was accurately predicting molecular structures; in math, it was solving Olympiad-level problems.

The Two-Step Process: Pretraining and Reinforcement Learning

The method behind this success involves two key steps: pretraining and reinforcement learning. First, the neural network is exposed to massive datasets (Go games, the internet, scientific literature). Then, it refines its performance through trial and error. For AlphaGo, this meant playing against itself; for AlphaFold, it meant learning to recognize correctly folded proteins; for large language models like ChatGPT, it means optimizing responses based on human feedback or self-correction.
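The two steps can be illustrated on a toy scale. The sketch below is not AlphaGo's method: it swaps the neural network for a lookup table and Go for a simple take-away game (remove 1 to 3 stones, and whoever takes the last stone wins). The function names, the game and the made-up "human" records are all assumptions chosen for illustration. It only shows the shape of the idea: first imitate recorded moves, then refine by playing against yourself and scoring the outcome.

```python
import random
from collections import Counter, defaultdict

MAX_TAKE = 3  # toy rule (assumption): remove 1-3 stones; taking the last stone wins


def legal_moves(pile):
    return range(1, min(MAX_TAKE, pile) + 1)


def pretrain(recorded_moves):
    """Step 1 (imitation): count how often each move was played in each position."""
    counts = defaultdict(Counter)
    for pile, move in recorded_moves:
        counts[pile][move] += 1
    return counts


def self_play(counts, episodes=20000, epsilon=0.2, max_pile=10, seed=0):
    """Step 2 (trial and error): refine move values from wins and losses in self-play."""
    rng = random.Random(seed)
    value = defaultdict(float)  # (pile, move) -> average outcome for the player who moved
    visits = defaultdict(int)

    def choose(pile):
        moves = list(legal_moves(pile))
        if rng.random() < epsilon:  # occasionally explore a random move
            return rng.choice(moves)
        # Prefer the highest learned value; break ties using the pretraining counts.
        return max(moves, key=lambda m: (value[(pile, m)], counts[pile][m]))

    for _ in range(episodes):
        pile, history = rng.randint(1, max_pile), []
        while pile > 0:
            move = choose(pile)
            history.append((pile, move))
            pile -= move
        outcome = 1.0  # the clear success metric: whoever moved last has won
        for key in reversed(history):
            visits[key] += 1
            value[key] += (outcome - value[key]) / visits[key]  # running average
            outcome = -outcome  # players alternate, so the sign flips each ply

    # Greedy policy: the best-valued move for every pile size.
    return {p: max(legal_moves(p), key=lambda m: value[(p, m)])
            for p in range(1, max_pile + 1)}


# Made-up "human" records as (pile, move) pairs, purely for illustration.
games = [(5, 2), (5, 2), (5, 1), (3, 3)]
policy = self_play(pretrain(games))
print(policy)
```

In this toy, the invented records favor taking 2 stones from a pile of 5, which is a losing habit; self-play is free to overrule it because the win-or-lose signal is unambiguous. That mirrors, in miniature, the article's point that clear success metrics are what let trial and error improve on the training data.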

The Black Box Problem

Despite these advancements, a fundamental challenge remains: neural networks are largely opaque. Even the engineers who built AlphaGo couldn’t fully explain why it made certain moves, like the famous move 37 of the second game, which initially seemed irrational but ultimately proved brilliant. This “black box” nature means we often don’t know why an AI arrives at a particular conclusion, raising concerns about reliability and trustworthiness.

As Pushmeet Kohli of Google DeepMind notes, “These models will come up with answers and we will not know whether they are genius insights or hallucinations.” Resolving this remains a core focus of ongoing research.

The Future of AI: Where Data and Definition Reign

AlphaGo’s legacy isn’t just about beating a human at Go. It’s about proving the power of neural networks and laying the groundwork for AI applications that are transforming science and technology. The areas where AI is thriving today (mathematics, programming, and other fields with abundant, verifiable data) highlight a key lesson: progress requires both data and clear definitions of success. The next decade promises even more dramatic advancements, but understanding the limitations of these systems will be just as crucial as celebrating their achievements.